图文详解Windows 8.0上Eclipse 4.4.0 配置CentOS 6.5 上的Hadoop2.2.0开发环境,给需要的朋友参考学习。

Eclipse的Hadoop插件下载地址:https://github.com/winghc/hadoop2x-eclipse-plugin

将下载的压缩包解压,将hadoop-eclipse-kepler-plugin-2.2.0这个jar包扔到eclipse下面的dropins目录下,重启eclipse即可

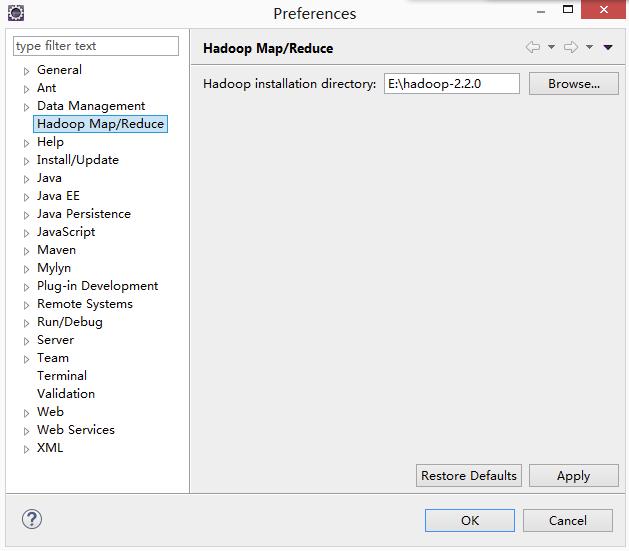

这里面的hadoop installation directory并不是你windows上装的hadoop目录,而仅仅是你在centos上编译好的源码,在windows上的解压路径而已,该路径仅仅是用于在创建MapReduce Project能从这个地方自动引入MapReduce所需要的jar

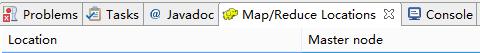

进入Window-->Open Perspective-->other-->Map/Reduce打开Map/Reduce窗口

找到

右击选择,New Hadoop location,这个时候会出现

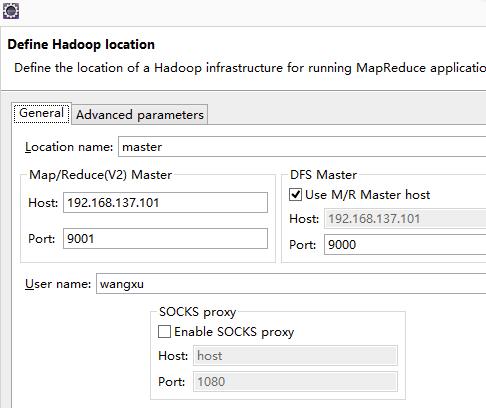

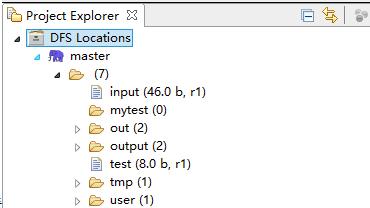

Map/Reduce(V2)中的配置对应于mapred-site.xml中的端口配置,DFS Master中的配置对应于core-site.xml中的端口配置,配置完成之后finish即可,这个时候可以查看

测试,新建一个MapReduce项目

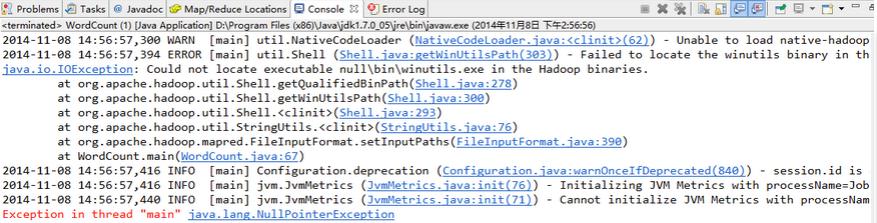

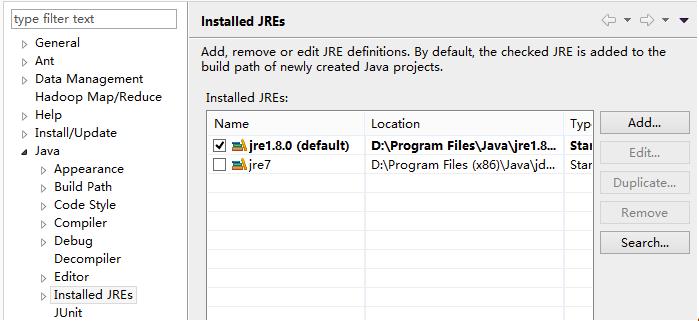

要解决这个问题,你必须要完成如下几个步骤,在windows上配置HADOOP_HOME,然后将%HADOOP_HOME%\bin加入到path之中,然后去https://github.com/srccodes/hadoop-common-2.2.0-bin下载一个,下载之后将这个bin目录里面的东西全部拷贝到你自己windows上的HADOOP的bin目录下,覆盖即可,同时把hadoop.dll加到C盘下的system32中,如果这些都完成之后还是碰到:Exception in thread "main" java.lang.UnsatisfiedLinkError: org.apache.hadoop.io.nativeio.NativeIO$Windows.access0(Ljava/lang/String;I)Z,那么就检查一下你的JDK,有可能是32位的JDK导致的,需要下载64位JDK安装,并且在eclipse将jre环境配置为你新安装的64位JRE环境

如我的jre1.8是64位,jre7是32位,如果这里面没有,你直接add即可,选中你的64位jre环境之后,就会出现了。

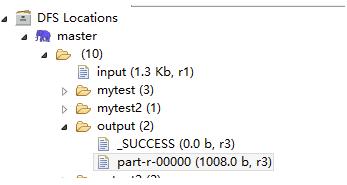

之后写个wordcount程序测试一下,贴出我的代码如下,前提是你已经在hdfs上建好了input文件,并且在里面放些内容

import java.io.IOException;

import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.GenericOptionsParser;

public class WordCount {

public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable> {

private final static IntWritable one = new IntWritable(1);

private Text word = new Text();

public void map(Object key, Text value, Context context) throws IOException, InterruptedException {

StringTokenizer itr = new StringTokenizer(value.toString());

while (itr.hasMoreTokens()) {

word.set(itr.nextToken());

context.write(word, one);

}

}

}

public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

private IntWritable result = new IntWritable();

public void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {

int sum = 0;

for (IntWritable val : values) {

sum += val.get();

}

result.set(sum);

context.write(key, result);

}

}

public static void main(String[] args) throws Exception {

// System.setProperty("hadoop.home.dir", "E:\\hadoop2.2\\");

Configuration conf = new Configuration();

String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();

// if (otherArgs.length != 2) {

// System.err.println("Usage: wordcount <in> <out>");

// System.exit(2);

// }

Job job = new Job(conf, "word count");

job.setJarByClass(WordCount.class);

job.setMapperClass(TokenizerMapper.class);

job.setCombinerClass(IntSumReducer.class);

job.setReducerClass(IntSumReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

FileInputFormat.addInputPath(job, new Path("hdfs://master:9000/input"));

FileOutputFormat.setOutputPath(job, new Path("hdfs://master:9000/output"));

boolean flag = job.waitForCompletion(true);

System.out.print("SUCCEED!" + flag);

System.exit(flag ? 0 : 1);

System.out.println();

}

}

注:以上图片上传到红联Linux系统教程频道中。